Simulating the nonlinear optical physics that underlies ultrafast laser systems is computationally demanding — a practical bottleneck in settings that require rapid feedback. A new study by researchers at Stanford University, University of California, Los Angeles (UCLA), and SLAC National Accelerator Laboratory introduces a deep learning surrogate that delivers orders-of-magnitude acceleration over conventional simulation methods, while maintaining high fidelity across a challenging range of pulse shapes.

The work centers on second-order nonlinear optics, or χ² processes, in which light waves exchange energy inside specially engineered crystals to generate new frequencies and tailored pulse shapes. In particle accelerator facilities, these processes play a key role. At SLAC’s upgraded Linac Coherent Light Source (LCLS-II), infrared laser pulses are first converted to green light and then to ultraviolet (UV). The UV pulse strikes a cathode to liberate an electron bunch that is subsequently accelerated and modulated to produce intense X-ray pulses. The temporal shape of the UV pulse directly influences the properties of that electron bunch and, ultimately, the quality of the X-rays available for science. A surrogate model for the nonlinear χ² frequency conversion step at the heart of this process is reported in Advanced Photonics.

The standard simulation approach is to numerically solve the nonlinear Schrödinger equation using the split-step Fourier method (SSFM), which alternates between time- and frequency-domain operations at each propagation step. It is accurate but slow, accounting for roughly 95 percent of total runtime in the full laser simulation. Taking inspiration from prior work applying recurrent neural networks to pulse propagation in fiber optics, the team developed a long short-term memory (LSTM) surrogate tailored to the more general, multifield χ² setting. Noncollinear sum-frequency generation (SFG), involving three simultaneously evolving coupled optical fields across a wide range of pulse shapes, serves as a rigorous and broadly relevant testbed. A key design choice was to operate entirely in a compressed frequency-domain representation, avoiding the repeated domain transformations that make SSFM costly.
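To make the cost of the split-step Fourier method concrete, here is a minimal sketch in Python with NumPy. It is not the solver used in the study: it assumes a single field governed by a textbook nonlinear Schrödinger equation with a Kerr-type nonlinearity, rather than the three coupled fields of the χ² problem. The function name `ssfm_propagate`, the parameters `beta2` and `gamma`, and all numerical values are hypothetical choices for illustration. The point is the structure of the loop, in which every propagation step requires a round trip between the time and frequency domains.

```python
import numpy as np

def ssfm_propagate(a0, dt, length, n_steps, beta2, gamma):
    """Symmetric split-step Fourier propagation of a complex envelope a0(t).

    Illustrative single-field model (an assumption, not the study's solver):
        dA/dz = -i*(beta2/2) * d^2A/dt^2 + i*gamma*|A|^2 * A
    The dispersion sign follows NumPy's FFT convention.
    """
    a = np.asarray(a0, dtype=complex)
    omega = 2 * np.pi * np.fft.fftfreq(a.size, d=dt)  # angular frequency grid
    dz = length / n_steps
    # Dispersion over half a step, a pure phase applied in the frequency domain
    half_disp = np.exp(0.5j * (beta2 / 2) * omega**2 * dz)
    for _ in range(n_steps):
        a = np.fft.ifft(half_disp * np.fft.fft(a))        # frequency domain: half dispersion step
        a = a * np.exp(1j * gamma * np.abs(a) ** 2 * dz)  # time domain: full nonlinear step
        a = np.fft.ifft(half_disp * np.fft.fft(a))        # frequency domain: half dispersion step
    return a

# Hypothetical example: a Gaussian pulse in dimensionless units
t = np.linspace(-10, 10, 1024, endpoint=False)
dt = t[1] - t[0]
pulse = np.exp(-t**2 / 2)
out = ssfm_propagate(pulse, dt=dt, length=1.0, n_steps=100, beta2=-1.0, gamma=1.0)
print("energy in: ", np.sum(np.abs(pulse) ** 2) * dt)
print("energy out:", np.sum(np.abs(out) ** 2) * dt)
```

Each pass through the loop performs two forward and two inverse FFTs. A surrogate that stays in one (compressed) frequency-domain representation avoids those repeated transforms, which is the design choice the article describes.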

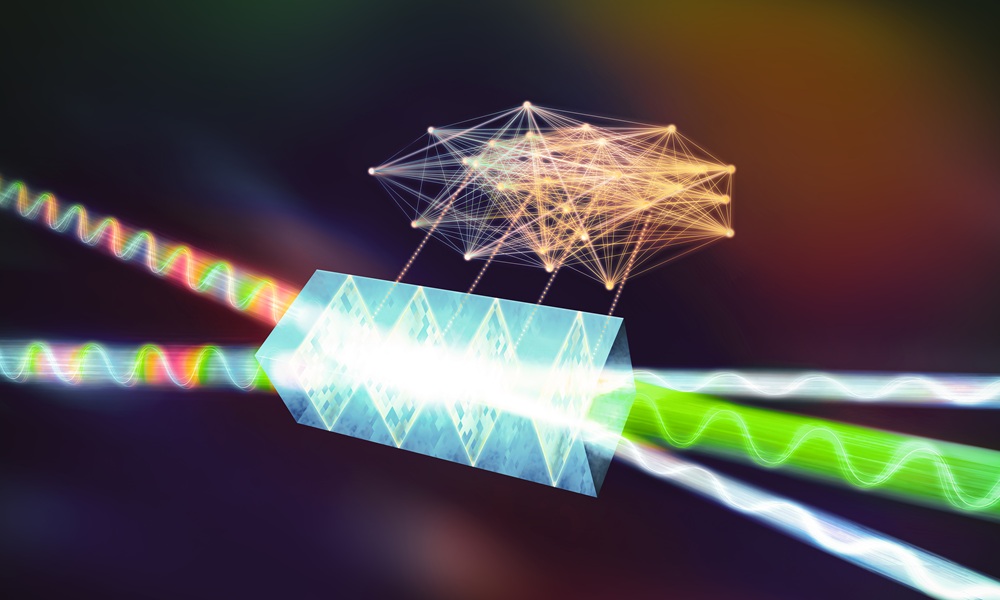

(a) Schematic of the noncollinear SFG process, in which three coupled optical fields (A₁, A₂, A₃) propagate through 100 discretized crystal slices, with the LSTM surrogate replacing the conventional SSFM solver at each step. (b) Architecture of the LSTM network, showing the recurrent layers and fully connected output layers. Credit: Hirschman et al., doi 10.1117/1.AP.8.3.036004

The surrogate accurately reconstructs temporal and spectral profiles across a wide range of pulse shaping conditions, including cases with pronounced spectral holes and strong phase modulation. Running with batched GPU inference, average per-instance simulation time falls to a few milliseconds — orders of magnitude faster than conventional methods. Notably, the model appears to capture the global coupled dynamics of the interaction: when the primary SFG output is predicted well, the secondary fields tend to match closely too.

A broader goal of this work is the integration of such surrogates with experimental laser systems. The modular framework — in which individual physical processes are each represented by a trained surrogate block — points toward predictive models coupled directly to running experiments.


Looking ahead, linking fast machine‑learning surrogates directly with experiments opens the door to applications such as full digital twin development, adaptive control, and tighter integration with downstream diagnostics across a range of laser-driven facilities.

For details, see the original Gold Open Access article by Hirschman et al., “Deep learning-assisted modeling for χ(2) nonlinear optics,” Adv. Photon. 8(3) 036004 (2026), doi: 10.1117/1.AP.8.3.036004